Shahanur Islam Shagor

How We Built an Autonomous Drone Swarm That Works Without GPS

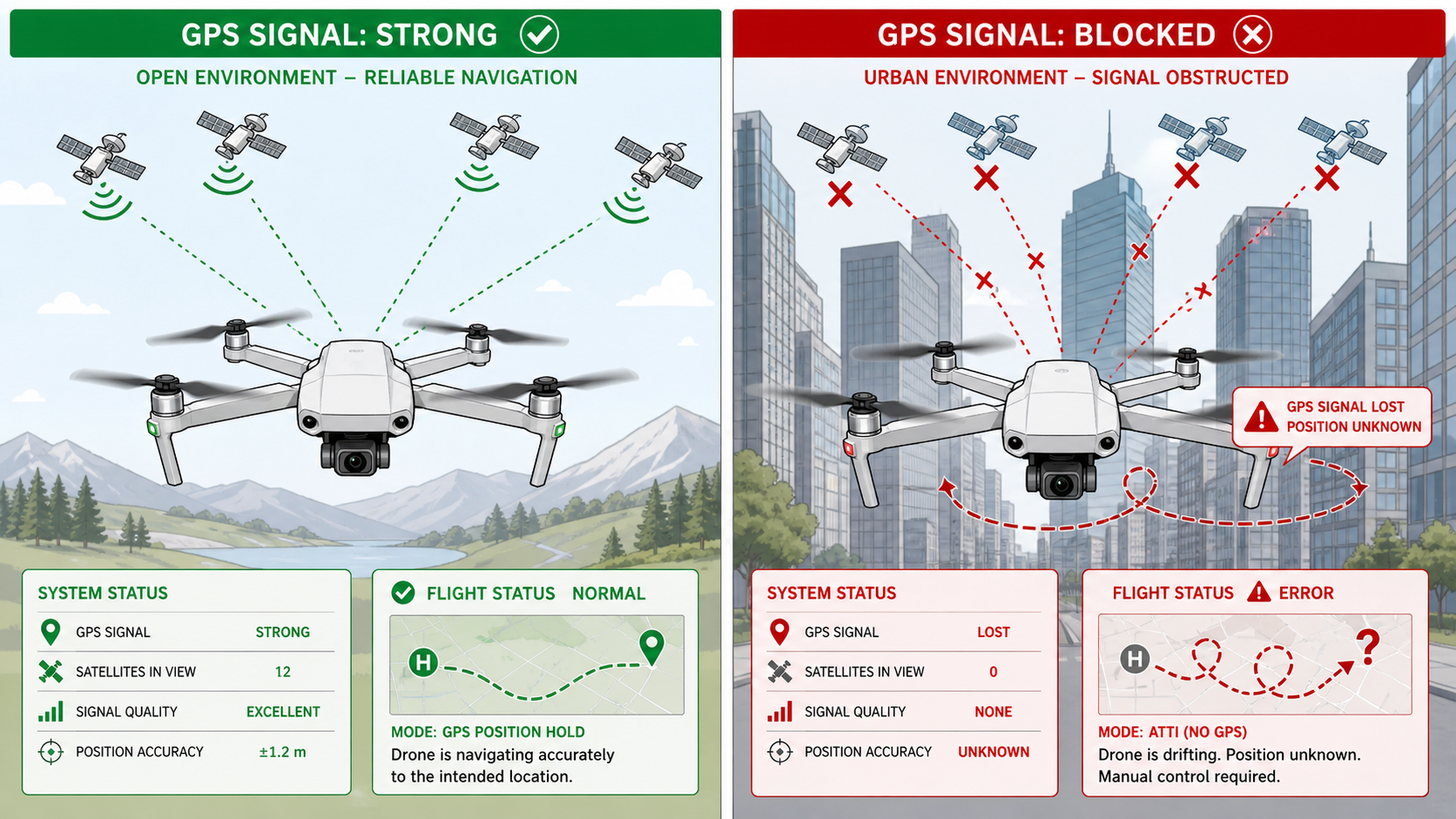

This infographic compares how drones perform with and without GPS signals. In open environments, strong satellite connections enable precise navigation and stable flight. In contrast, in urban areas tall buildings block GPS signals, causing loss of positioning, drift, and flight errors, which highlights the importance of GPS-denied navigation systems for reliable operation.

Imagine flying a fleet of drones through a dense urban canyon, underground tunnel, or hostile environment where GPS signals simply don't exist. Or picture a scenario where an adversary is actively jamming satellite signals, trying to ground your entire operation. For most commercial drone systems today, this would be game over. But what if your drones could navigate, coordinate, and complete their mission even when every satellite signal disappears?

That's exactly what we set out to build: a fully autonomous drone swarm system that doesn't just survive GPS denial; it thrives in it.

The Problem: When GPS Fails, Everything Falls Apart

Let's be honest about something the drone industry doesn't talk about enough: most drones you can buy today depend heavily on GPS for navigation and position hold. Strip away those satellite signals, and even expensive commercial platforms start drifting, lose position awareness, and ultimately crash or initiate emergency landings.

This isn't just a theoretical problem. GPS denial happens constantly in the real world:

Urban environments create what engineers call "urban canyons"—tall buildings that block satellite signals just like mountain valleys block sunlight. Try flying a GPS-dependent drone through downtown Manhattan or Hong Kong, and you'll quickly discover how unreliable satellite positioning becomes.

Indoor operations are completely off-limits for traditional drones. Warehouses, factories, underground infrastructure, cave systems—any enclosed space immediately eliminates GPS as an option.

Electronic warfare is the elephant in the room that defense applications can't ignore. Modern jamming equipment can deny GPS across entire regions at a tiny fraction of the cost of the systems it disrupts. In contested environments, assuming GPS availability is assuming defeat.

Natural interference from atmospheric conditions, solar activity, and terrain features can degrade or eliminate GPS accuracy even in otherwise open areas.

The industry's dirty secret? Most "autonomous" drones are actually just remotely piloted vehicles with some GPS-waypoint following capabilities. Remove the satellite signals, and the autonomy disappears.

We decided to build something different.

The Vision: True Autonomy in GPS-Denied Environments

Our goal wasn't incremental improvement. We wanted to build a system that could:

Navigate accurately without any external positioning infrastructure

Coordinate multiple drones as a unified swarm

Maintain formation while avoiding obstacles

Recover from complete position loss

Operate securely in adversarial conditions

Scale from single drones to large fleets

And we wanted it to work not just in carefully controlled laboratory environments, but in real-world conditions with dynamic obstacles, varying lighting, weather interference, and communication constraints.

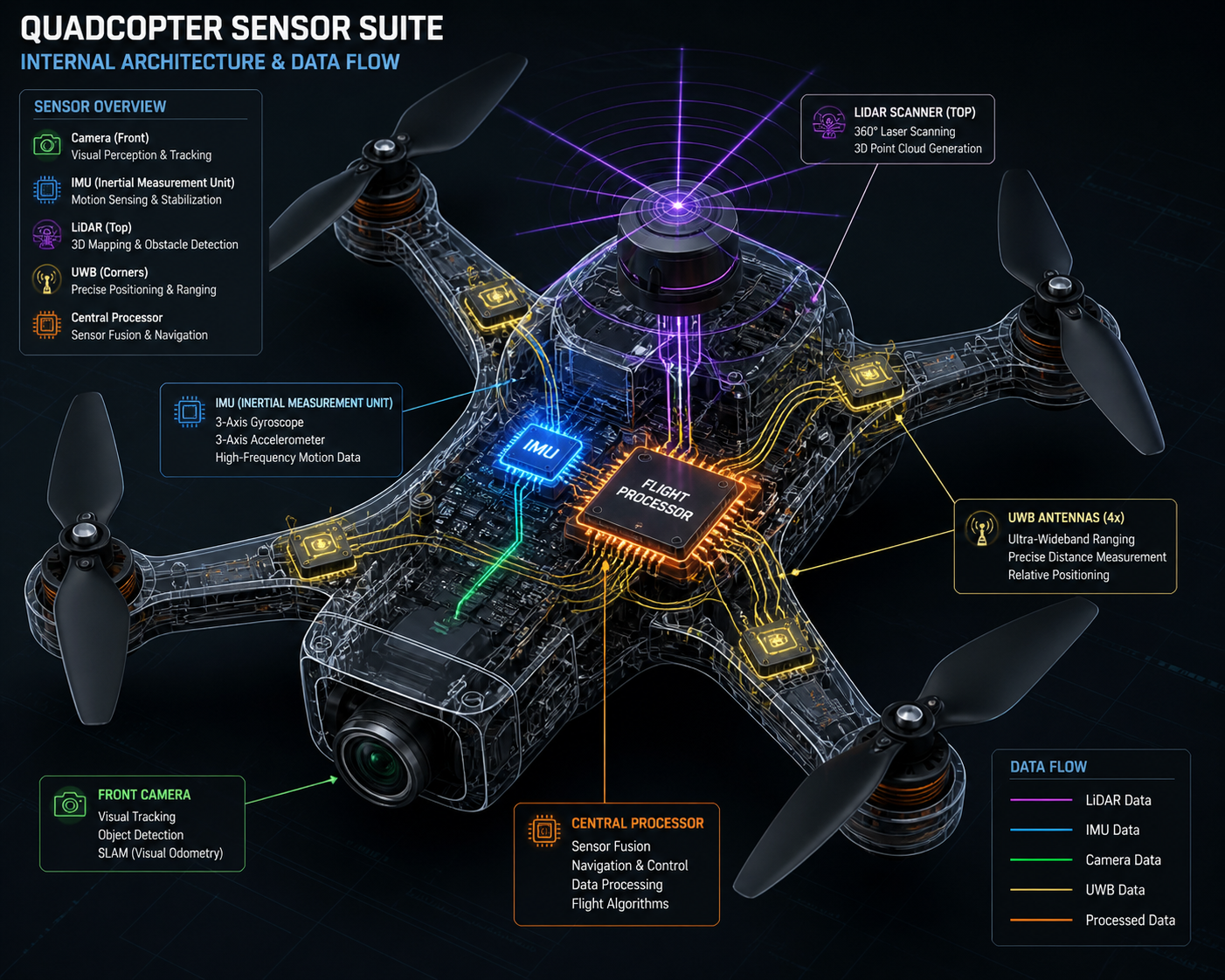

The result is a multi-layered navigation framework that combines visual-inertial odometry, ultra-wideband ranging, LiDAR mapping, and sensor fusion into a unified system that achieves sub-decimeter accuracy indoors (8.7 cm RMSE in our motion-capture tests) and roughly 15 cm outdoors, with GPS completely unavailable.

The Architecture: Building on Multiple Layers of Redundancy

The fundamental insight driving our architecture is that no single sensor modality is reliable enough to trust alone. GPS taught us that lesson: it works brilliantly when it works, but when it fails, you need alternatives immediately available.

Layer 1: Visual-Inertial Odometry as the Foundation

At the core of our system is a visual-inertial odometry pipeline that fuses camera images with inertial measurement unit data. Think of this as teaching the drone to navigate like a human with eyes and an inner ear.

The camera tracks visual features in the environment—corners, edges, distinctive textures. As the drone moves, these features shift position in the image frame. By tracking how features move across multiple frames, we can estimate the drone's motion through 3D space.

But cameras alone aren't enough. They struggle with motion blur, changing lighting, and featureless environments. That's where the IMU comes in. A six-axis IMU (a three-axis accelerometer plus a three-axis gyroscope) provides high-frequency measurements of acceleration and rotation rate, filling in the gaps between camera frames and providing motion estimates even when visual tracking fails.

The magic happens in the fusion algorithm: an Extended Kalman Filter that combines visual measurements with IMU predictions. The IMU propagates the state estimate forward at 400 Hz, maintaining smooth, high-frequency position and velocity tracking. Camera measurements arriving at 30 Hz then correct the drift that builds up between frames by constraining the drone's motion relative to tracked visual features, keeping the estimate anchored to the observed scene.

We use an error-state formulation that tracks small perturbations around the current estimate rather than the full state vector. This approach handles the mathematical complexities of rotating reference frames (represented as quaternions) while maintaining the computational efficiency needed for real-time execution on embedded processors.
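To make the error-state idea concrete, here is a minimal Python sketch of the two quaternion operations it relies on: propagating the nominal orientation with a gyroscope reading, and folding the filter's three-component small-angle error estimate back into that orientation. The (w, x, y, z) Hamilton convention, the body-frame (right-multiplied) error, and the function names are assumptions for illustration, not the project's code.

```python
import math

Quat = tuple[float, float, float, float]  # (w, x, y, z), Hamilton convention (assumed)

def quat_mul(a: Quat, b: Quat) -> Quat:
    """Hamilton product a * b."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (
        aw * bw - ax * bx - ay * by - az * bz,
        aw * bx + ax * bw + ay * bz - az * by,
        aw * by - ax * bz + ay * bw + az * bx,
        aw * bz + ax * by - ay * bx + az * bw,
    )

def normalize(q) -> Quat:
    n = math.sqrt(sum(c * c for c in q))
    return tuple(c / n for c in q)

def rotvec_to_quat(v) -> Quat:
    """Convert a rotation vector (axis * angle, radians) to a unit quaternion."""
    angle = math.sqrt(sum(c * c for c in v))
    if angle < 1e-12:
        # First-order approximation for vanishingly small rotations
        return normalize((1.0, 0.5 * v[0], 0.5 * v[1], 0.5 * v[2]))
    s = math.sin(angle / 2) / angle
    return (math.cos(angle / 2), v[0] * s, v[1] * s, v[2] * s)

def propagate(q: Quat, gyro, dt: float) -> Quat:
    """Nominal-state step: integrate the body-frame angular rate over dt."""
    return normalize(quat_mul(q, rotvec_to_quat([w * dt for w in gyro])))

def inject_error(q: Quat, dtheta) -> Quat:
    """Fold the filter's 3-D small-angle error estimate into the nominal quaternion."""
    return normalize(quat_mul(q, rotvec_to_quat(dtheta)))
```

Because the filter's covariance only describes the three-component angle error, it never has to represent the unit-norm constraint of the four-component quaternion, which is much of the practical appeal of the error-state formulation.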

The result? Our visual-inertial odometry achieves position drift rates under 0.5% of distance traveled in typical environments. A drone flying a 100-meter trajectory accumulates less than half a meter of error—without any external position references whatsoever.

Layer 2: Ultra-Wideband Ranging for Absolute Position

Visual-inertial odometry excels at tracking relative motion, but like all dead reckoning systems, it accumulates drift over time. Even at 0.5% drift rates, a long-duration mission eventually loses track of absolute position.

This is where ultra-wideband (UWB) radio ranging enters the picture. UWB receivers can timestamp the arrival of very short radio pulses with sub-nanosecond precision; since radio waves travel about 30 centimeters per nanosecond, that timing resolution translates into centimeter-level distance measurements.

We deploy fixed UWB anchor nodes at known positions around the operational area. Each drone carries a UWB tag that periodically transmits short ranging messages. The synchronized anchors timestamp each message's arrival, and the time differences of arrival (TDOA) between anchor pairs constrain the drone to hyperbolic surfaces whose intersection gives its absolute position, a process called multilateration.

The beauty of TDOA is that it doesn't require the mobile tag to know precise timing—only the differences in arrival times matter, and those are measured at the synchronized anchor nodes. This dramatically simplifies the onboard electronics and power budget.
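A minimal sketch of how TDOA measurements can be turned into a position, assuming a 2-D layout, four anchors at known coordinates, and time differences already converted to range differences (Δd = c·Δt). It runs plain Gauss-Newton iterations on the range-difference residuals; the function name, anchor layout, and starting guess are hypothetical, and a deployed solver would add the third dimension, measurement weighting, and outlier rejection.

```python
import math

C = 299_792_458.0  # speed of light, m/s: 1 ns of timing error ~ 0.3 m of range error

def tdoa_solve(anchors, range_diffs, guess, iters=20):
    """Estimate a 2-D position from range differences d_i - d_0 (metres).

    anchors[0] is the reference anchor; range_diffs[i-1] pairs with anchors[i].
    """
    x, y = guess
    ax0, ay0 = anchors[0]
    for _ in range(iters):
        d0 = math.hypot(x - ax0, y - ay0)
        jtj = [[0.0, 0.0], [0.0, 0.0]]
        jtr = [0.0, 0.0]
        for (ax, ay), m in zip(anchors[1:], range_diffs):
            di = math.hypot(x - ax, y - ay)
            r = (di - d0) - m                       # residual: predicted minus measured
            jx = (x - ax) / di - (x - ax0) / d0     # d r / d x
            jy = (y - ay) / di - (y - ay0) / d0     # d r / d y
            jtj[0][0] += jx * jx
            jtj[0][1] += jx * jy
            jtj[1][1] += jy * jy
            jtr[0] += jx * r
            jtr[1] += jy * r
        a, b, d = jtj[0][0], jtj[0][1], jtj[1][1]
        det = a * d - b * b
        # Solve (J^T J) delta = -J^T r using the closed-form 2x2 inverse
        dx = -(d * jtr[0] - b * jtr[1]) / det
        dy = -(-b * jtr[0] + a * jtr[1]) / det
        x, y = x + dx, y + dy
        if math.hypot(dx, dy) < 1e-9:
            break
    return x, y
```

Usage: with anchors at the corners of a 10 m square and a drone at (3, 4), feeding the exact range differences and starting from the square's center recovers the true position within a few iterations.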

UWB positioning provides 10-15 centimeter accuracy at update rates around 10-20 Hz. It's not as fast as the IMU or as smooth as vision over short timescales, but it offers something neither can provide alone: bounded, absolute position estimates that prevent unlimited drift accumulation.

Layer 3: LiDAR Mapping for Environmental Understanding

Cameras and UWB tell us where we are, but they don't directly tell us where we can't go. For obstacle avoidance and terrain mapping, we integrate a 360-degree scanning LiDAR rangefinder.

The RPLIDAR A1 we selected sweeps a laser beam through a full rotation at 5.5 Hz, measuring distances out to 12 meters with 1-degree angular resolution. This generates approximately 360 range measurements per rotation, building up a dense 2D occupancy grid of the surrounding environment.
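One common way to build such a grid is a log-odds update along each beam: cells the beam passes through become more likely free, and the cell where it ends becomes more likely occupied. The sketch below assumes a sparse grid stored as a dict, the sensor sitting at a known cell, beam angles already expressed in the grid frame, Bresenham ray tracing, and illustrative log-odds increments; none of these parameters come from the project.

```python
import math

L_OCC, L_FREE = 0.85, -0.4   # illustrative log-odds increments (assumed)
L_MIN, L_MAX = -4.0, 4.0     # clamp so cells can still change later

def bresenham(x0, y0, x1, y1):
    """Integer grid cells on the line from (x0, y0) to (x1, y1), inclusive."""
    cells = []
    dx, dy = abs(x1 - x0), -abs(y1 - y0)
    sx = 1 if x0 < x1 else -1
    sy = 1 if y0 < y1 else -1
    err = dx + dy
    while True:
        cells.append((x0, y0))
        if x0 == x1 and y0 == y1:
            return cells
        e2 = 2 * err
        if e2 >= dy:
            err += dy
            x0 += sx
        if e2 <= dx:
            err += dx
            y0 += sy

def integrate_scan(grid, origin_cell, scan, resolution, max_range=12.0):
    """Update grid (dict[(ix, iy)] -> log-odds) with (angle_rad, range_m) readings."""
    ox, oy = origin_cell
    for angle, rng in scan:
        hit = rng < max_range                 # max-range readings carry no obstacle
        r = min(rng, max_range)
        ex = ox + round(r * math.cos(angle) / resolution)
        ey = oy + round(r * math.sin(angle) / resolution)
        ray = bresenham(ox, oy, ex, ey)
        for cell in ray[:-1]:                 # cells the beam passed through
            grid[cell] = max(L_MIN, grid.get(cell, 0.0) + L_FREE)
        end = ray[-1]
        delta = L_OCC if hit else L_FREE
        grid[end] = min(L_MAX, max(L_MIN, grid.get(end, 0.0) + delta))
    return grid

def occupancy_probability(logodds):
    return 1.0 - 1.0 / (1.0 + math.exp(logodds))
```

Working in log-odds turns repeated Bayesian updates into additions, and the clamp keeps cells from becoming so certain that a moved obstacle can never be cleared.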

We use this occupancy grid for several purposes:

Obstacle detection identifies static and moving obstacles in the drone's flight path, triggering avoidance maneuvers before collisions can occur.

Ground plane estimation provides an alternative altitude reference when barometric pressure readings become unreliable or when operating in environments with significant vertical structure.

Loop closure detection enables the SLAM subsystem to recognize previously visited locations by matching current LiDAR scans against historical map data, triggering global optimization that eliminates accumulated drift.

The LiDAR data also feeds into our experience memory system, where we accumulate statistical patterns about environment types and their associated navigation challenges. Narrow corridors, open spaces, areas with poor visual features—the system learns which conditions favor which sensors and adapts its fusion weights accordingly.

Layer 4: Keyframe-Based SLAM for Long-Term Consistency

Visual-inertial odometry and UWB ranging handle short-to-medium duration navigation, but for extended missions we need a way to maintain global consistency and recover from complete position loss.

Our simultaneous localization and mapping (SLAM) subsystem maintains a database of keyframes—selected image frames with associated pose estimates and extracted visual features. As the drone flies, we continuously compare the current camera view against the keyframe database using ORB (Oriented FAST and Rotated BRIEF) descriptors.
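ORB produces 256-bit binary descriptors, so candidate matches are compared by Hamming distance. The sketch below shows brute-force matching with a ratio test, storing each descriptor as a Python integer; the 0.8 ratio, the distance cap, and the crude keyframe score are assumptions, and a real place-recognition pipeline would typically query a bag-of-words index rather than compare against every keyframe.

```python
def hamming(a: int, b: int) -> int:
    """Number of differing bits between two binary descriptors."""
    return bin(a ^ b).count("1")

def match_descriptors(query, train, ratio=0.8, max_dist=64):
    """Return (query_idx, train_idx) pairs that pass the ratio test."""
    matches = []
    for qi, q in enumerate(query):
        best = second = None   # (distance, train_idx)
        for ti, t in enumerate(train):
            d = hamming(q, t)
            if best is None or d < best[0]:
                second, best = best, (d, ti)
            elif second is None or d < second[0]:
                second = (d, ti)
        if best is None or best[0] > max_dist:
            continue
        # Keep the match only if it is clearly better than the runner-up
        if second is None or best[0] < ratio * second[0]:
            matches.append((qi, best[1]))
    return matches

def keyframe_score(query, keyframe_descriptors):
    """Fraction of query features matched: a crude loop-closure candidate score."""
    if not query:
        return 0.0
    return len(match_descriptors(query, keyframe_descriptors)) / len(query)
```

The ratio test rejects features that match two keyframe descriptors almost equally well, which is exactly the ambiguity that produces false loop closures in repetitive scenes.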

When the system recognizes a previously visited location—a "loop closure"—it creates a constraint linking the current pose to the historical keyframe pose. This constraint gets added to a pose graph representing the drone's trajectory and the spatial relationships between keyframes.

A global optimization then adjusts this pose graph: historical keyframe poses (and, in the full bundle-adjustment formulation, landmark positions) are shifted to minimize the total error across all keyframes, including reprojection error, while satisfying the loop closure constraints. We use the iSAM2 (incremental Smoothing and Mapping) algorithm for this optimization; it reorders variables selectively and refactorizes only the parts of the problem affected by new measurements, so most updates stay fast as the map grows, although a large loop closure can still touch a significant part of the graph.

The result is impressive. When a drone completes a loop and recognizes its starting location, global optimization can reduce accumulated position error by 70-80%, bringing the estimate back to within 5 centimeters of ground truth even after minutes of flying.
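The effect of a loop closure can be reproduced on a deliberately tiny pose graph: five 1-D poses, odometry edges that are each biased by +5 cm, and one loop-closure edge stating that the last pose coincides with the first. The sketch solves the full weighted least-squares problem in one batch, which is the quantity iSAM2 maintains incrementally; the solver, function names, and numbers are illustrative, not the project's results.

```python
def solve_linear(a, b):
    """Gaussian elimination with partial pivoting (small dense systems only)."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[piv] = m[piv], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x

def optimize_pose_graph(n_poses, edges, prior_weight=1e6):
    """Least-squares 1-D pose graph.

    edges: (i, j, measured x_j - x_i, weight). A strong prior pins x_0 = 0.
    """
    h = [[0.0] * n_poses for _ in range(n_poses)]
    g = [0.0] * n_poses
    h[0][0] += prior_weight   # fixes the gauge freedom (global translation)
    for i, j, z, w in edges:
        # Residual (x_j - x_i - z): accumulate J^T W J and J^T W z
        h[i][i] += w
        h[j][j] += w
        h[i][j] -= w
        h[j][i] -= w
        g[i] -= w * z
        g[j] += w * z
    return solve_linear(h, g)

# True motion is +1, +1, -1, -1 (back to the start); odometry is biased by +0.05 each step
odometry = [1.05, 1.05, -0.95, -0.95]
odo_edges = [(k, k + 1, u, 1.0) for k, u in enumerate(odometry)]
loop_edge = (0, 4, 0.0, 1.0)   # "pose 4 is the same place as pose 0"
```

In this toy example the endpoint error drops from 0.2 m of dead reckoning to 0.04 m after the loop closure, with the correction spread evenly over all five edges because they share the same weight.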

Layer 5: Sensor Fusion via Extended Kalman Filter

With five different sensor modalities (IMU, camera, UWB, LiDAR, barometer) all providing position-related measurements at different rates and with different error characteristics, we need a principled way to combine everything into a single, coherent state estimate.

The Extended Kalman Filter serves as our central fusion engine. It maintains a 16-dimensional state vector representing position, velocity, orientation, and sensor biases, along with a covariance matrix quantifying uncertainty in each state component.

The filter operates in a predict-update cycle:

Prediction uses IMU measurements to propagate the state forward according to kinematic equations, modeling the drone's physics. This happens at 400 Hz, matching the IMU sample rate.

Updates incorporate measurements from other sensors as they arrive. Camera feature tracks at 30 Hz, UWB ranges at 10-20 Hz, LiDAR ground plane estimates at 5 Hz. Each measurement type has a corresponding measurement model that predicts what the sensor should observe given the current state estimate. The difference between predicted and actual measurements (the innovation) drives state corrections weighted by relative uncertainties.

The strength of the Kalman filter framework is that it balances trust between prediction and measurement according to their respective uncertainties. When visual tracking is confident (lots of good features, no motion blur), vision gets heavy weight. When UWB geometry is poor (drone far from anchors or in a bad GDOP configuration), ranging measurements get downweighted. That adaptation depends on each measurement model reporting a covariance that reflects current conditions (feature count and blur for vision, GDOP for UWB); given honest covariances, the filter adjusts its weighting as conditions change without manual retuning.
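The predict-update cycle and the uncertainty-based weighting can both be seen in a 1-D position/velocity filter: noisy accelerometer samples drive the prediction at 400 Hz, and a position fix (standing in for a UWB or visual update) arrives on every 40th sample, i.e. at 10 Hz. This is a linear Kalman filter sketch with assumed noise values and hypothetical names, not the project's 16-dimensional EKF.

```python
import random

class Kf1D:
    """Position/velocity Kalman filter driven by measured acceleration."""

    def __init__(self, accel_var=0.05):
        self.x = [0.0, 0.0]                 # [position m, velocity m/s]
        self.p = [[1.0, 0.0], [0.0, 1.0]]   # state covariance
        self.q = accel_var                  # accelerometer noise variance (assumed)

    def predict(self, accel, dt):
        pos, vel = self.x
        self.x = [pos + vel * dt + 0.5 * accel * dt * dt, vel + accel * dt]
        (a, b), (c, d) = self.p
        # P = F P F^T + G q G^T with F = [[1, dt], [0, 1]] and G = [dt^2 / 2, dt]
        g0, g1 = 0.5 * dt * dt, dt
        self.p = [[a + dt * (b + c) + dt * dt * d + g0 * g0 * self.q, b + dt * d + g0 * g1 * self.q],
                  [c + dt * d + g1 * g0 * self.q, d + g1 * g1 * self.q]]

    def update_position(self, z, r):
        """Fuse a position measurement z with variance r; return the innovation."""
        (a, b), (c, d) = self.p
        innovation = z - self.x[0]
        s = a + r                           # innovation variance (H = [1, 0])
        k0, k1 = a / s, c / s               # Kalman gain
        self.x = [self.x[0] + k0 * innovation, self.x[1] + k1 * innovation]
        self.p = [[(1 - k0) * a, (1 - k0) * b],
                  [c - k1 * a, d - k1 * b]]
        return innovation

def simulate(seconds=10.0, seed=1):
    """Constant 0.2 m/s^2 motion; returns the final absolute position error."""
    rng = random.Random(seed)
    kf, dt, true_acc = Kf1D(), 1.0 / 400, 0.2
    pos = vel = 0.0
    for step in range(int(seconds * 400)):
        pos += vel * dt + 0.5 * true_acc * dt * dt
        vel += true_acc * dt
        kf.predict(true_acc + rng.gauss(0.0, 0.05 ** 0.5), dt)   # 400 Hz noisy IMU
        if step % 40 == 39:                                       # 10 Hz position fix
            kf.update_position(pos + rng.gauss(0.0, 0.1), r=0.01)
    return abs(kf.x[0] - pos)
```

Calling `update_position` with a large variance `r` barely moves the estimate, while a small `r` pulls it most of the way to the measurement; that is the same mechanism that downweights UWB fixes taken under poor geometry.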

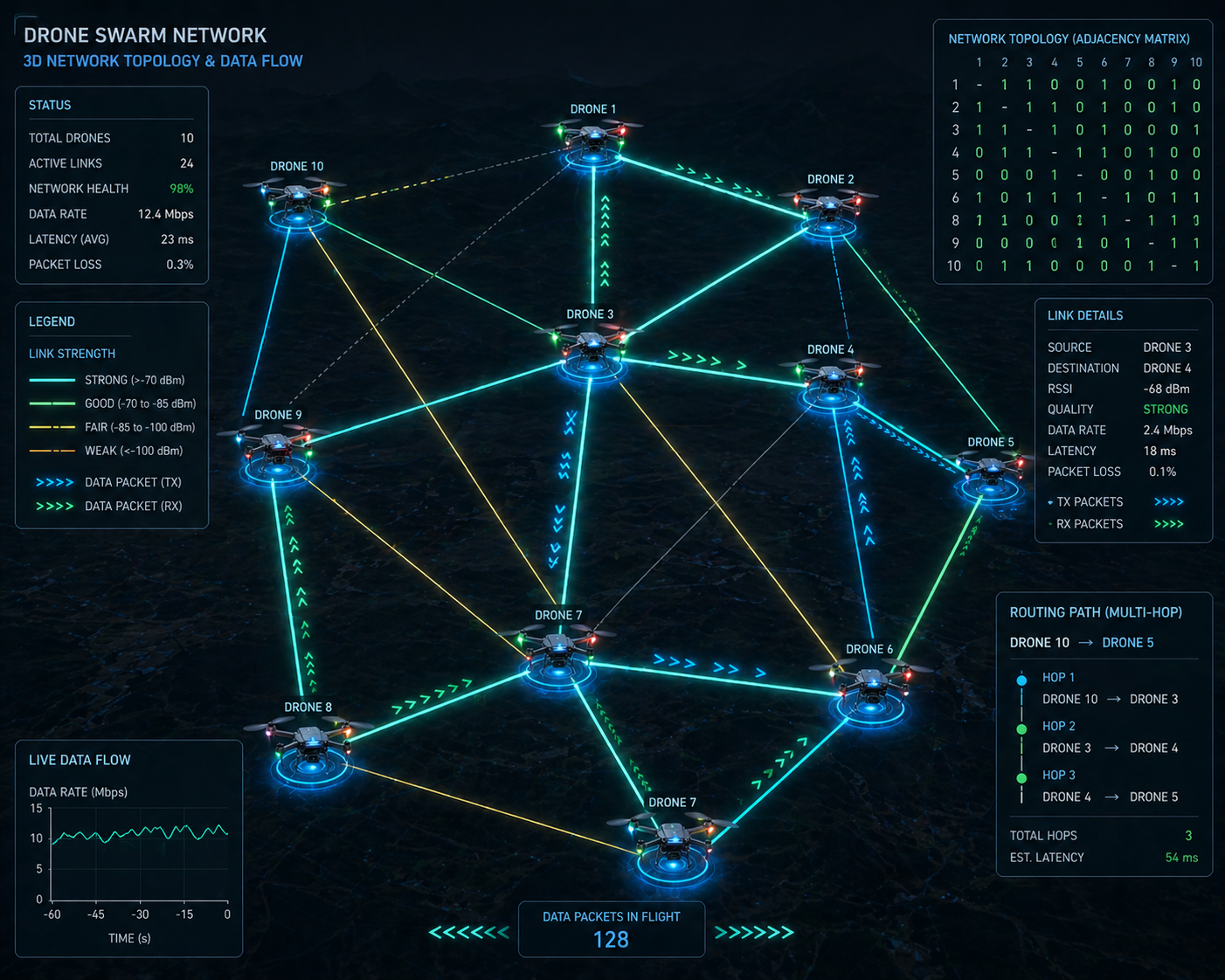

The Swarm: Coordination Without Central Control

Individual drone navigation is challenging enough, but coordinating multiple drones as a unified swarm introduces entirely new problems. Traditional approaches rely on centralized ground stations that receive telemetry from all drones, compute optimal trajectories, and transmit commands back. This works fine when communication is reliable and latency is low, but fails catastrophically when links degrade or when operation must continue despite ground station loss.

We built our swarm coordination around distributed consensus algorithms where drones make decisions locally based on information from nearby neighbors, without requiring global coordination.

V2X Mesh Networking

Our communication architecture uses Vehicle-to-Everything (V2X) mesh networking adapted for aerial mobility. Each drone broadcasts periodic beacon messages containing its position, velocity, battery status, and routing information to neighbors within radio range (typically 500-800 meters line-of-sight at 2.4 GHz).

The Optimized Link State Routing (OLSR) protocol, modified for 3D topology, handles multi-hop message forwarding. Drones maintain route tables identifying the best path to every other swarm member, updating routes preemptively based on predicted trajectory intersections to minimize link breaks during maneuvers.
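Once a node has learned the link topology (in OLSR, from HELLO and topology-control messages), building its route table is a shortest-path computation. This minimal sketch uses breadth-first search over hop count, which is the default OLSR route metric; the 3D modifications and trajectory-based pre-emptive rerouting described above are not modeled, and the topology format and function name are assumptions.

```python
from collections import deque

def route_table(topology, source):
    """Map each reachable destination to (next_hop, hop_count).

    topology: dict node -> set of neighbours (symmetric links assumed).
    """
    table = {}
    hops = {source: 0}
    first_hop = {source: None}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nb in sorted(topology.get(node, ())):   # sorted for deterministic ties
            if nb in hops:
                continue                            # already reached by a shorter path
            hops[nb] = hops[node] + 1
            first_hop[nb] = nb if node == source else first_hop[node]
            table[nb] = (first_hop[nb], hops[nb])
            queue.append(nb)
    return table
```

Each drone only needs the next hop for a destination; forwarding nodes repeat the same computation on their own copy of the topology.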

We prioritize safety-critical messages (collision warnings, formation updates) over routine telemetry using weighted fair queuing. A token bucket rate limiter prevents any single drone from monopolizing channel capacity, while CSMA/CA medium access control reduces packet collisions.
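A minimal sketch of the transmit path, assuming three message classes: a priority queue sends safety-critical traffic first, and a token bucket caps each node's sustained transmit rate while allowing short bursts. Strict priority is a simplification of the weighted fair queuing described above, and the class names, rates, and burst sizes are illustrative assumptions.

```python
import heapq
import itertools

# Hypothetical message classes: lower value is sent first
SAFETY, FORMATION, TELEMETRY = 0, 1, 2

class TokenBucket:
    def __init__(self, rate_bytes_per_s, burst_bytes, now=0.0):
        self.rate, self.capacity = rate_bytes_per_s, burst_bytes
        self.tokens, self.last = float(burst_bytes), now

    def allow(self, size, now):
        # Refill in proportion to elapsed time, never beyond the burst size
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= size:
            self.tokens -= size
            return True
        return False

class TxQueue:
    def __init__(self, bucket):
        self.bucket, self.heap = bucket, []
        self.seq = itertools.count()   # preserves FIFO order within a class

    def push(self, priority, payload):
        heapq.heappush(self.heap, (priority, next(self.seq), payload))

    def pop_sendable(self, now):
        """Return the most urgent payload if the rate limit allows it, else None."""
        if not self.heap:
            return None
        _, _, payload = self.heap[0]
        if not self.bucket.allow(len(payload), now):
            return None   # wait for tokens instead of letting lower-priority traffic jump ahead
        heapq.heappop(self.heap)
        return payload
```

Usage: with a 1000 B/s rate and a 300 B burst, a queued safety message goes out ahead of earlier telemetry, and the telemetry then waits until enough tokens have accumulated.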

The mesh scales reasonably well up to 16 drones before collision rates start degrading throughput noticeably. For larger swarms, we're exploring hierarchical cluster architectures where local cluster leaders aggregate and compress telemetry before forwarding to higher tiers.

Formation Control via Distributed Consensus

Formation maintenance uses a consensus-based control law where each drone minimizes the sum of squared deviations from desired relative positions to its neighbors:

$$u_i = -\sum_j k_{ij}\left[(p_i - p_j) - d_{ij}\right]$$

Here, $p_i$ and $p_j$ are the positions of drones $i$ and $j$, $d_{ij}$ is the desired displacement vector between them, and $k_{ij}$ are gain coefficients encoding the communication graph topology.

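This control law is beautifully simple yet powerful. Each drone only needs to know the positions of its immediate neighbors and the desired relative geometry. No centralized coordinator is required. For drones that track commanded velocities, with symmetric gains and mutually consistent desired displacements, the swarm provably converges to the target formation as long as the communication graph remains connected (up to a common translation, since the law only constrains relative positions).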
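The control law is easy to check in simulation. This sketch assumes single-integrator drones (each drone's velocity is set directly to its u_i), unit gains on an undirected ring of four drones, 2-D positions, desired displacements derived from per-drone slot offsets, and forward-Euler integration; all of these, and the function names, are illustrative choices.

```python
def formation_step(positions, offsets, neighbors, gain=1.0, dt=0.05):
    """One synchronous Euler step of u_i = -sum_j k_ij ((p_i - p_j) - d_ij).

    d_ij is taken as offsets[i] - offsets[j], so all desired displacements are consistent.
    """
    new = []
    for i, (px, py) in enumerate(positions):
        ux = uy = 0.0
        for j in neighbors[i]:
            dx = offsets[i][0] - offsets[j][0]   # desired displacement d_ij
            dy = offsets[i][1] - offsets[j][1]
            ux -= gain * ((px - positions[j][0]) - dx)
            uy -= gain * ((py - positions[j][1]) - dy)
        new.append((px + ux * dt, py + uy * dt))
    return new

def formation_error(positions, offsets, neighbors):
    """Largest deviation of any neighbour displacement from its desired value."""
    worst = 0.0
    for i, nbrs in enumerate(neighbors):
        for j in nbrs:
            ex = (positions[i][0] - positions[j][0]) - (offsets[i][0] - offsets[j][0])
            ey = (positions[i][1] - positions[j][1]) - (offsets[i][1] - offsets[j][1])
            worst = max(worst, (ex * ex + ey * ey) ** 0.5)
    return worst

square = [(0.0, 0.0), (2.0, 0.0), (2.0, 2.0), (0.0, 2.0)]   # 2 m square (assumed)
ring = [[1, 3], [0, 2], [1, 3], [2, 0]]                      # undirected ring graph
```

Because the gains are symmetric, the control inputs sum to zero and the swarm's centroid never moves: the drones settle into the square around wherever they started, so moving the formation as a whole needs an additional term such as the attractive goal force described next.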

We layer collision avoidance on top of formation control using artificial potential fields. Nearby obstacles and other drones create repulsive forces that push the control input away from collisions, while attractive forces pull toward the formation goal. The combined control law balances these competing objectives, typically achieving steady-state formation errors of roughly 20 centimeters.
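The repulsive part can be sketched with the classic potential-field formulation, U_rep = 0.5·η·(1/ρ - 1/ρ0)² inside an influence radius ρ0 and zero outside, whose negative gradient pushes the drone directly away from each nearby obstacle or neighbor. The radius, gain, speed clamp, function names, and the simple additive combination with the formation command are assumptions for illustration.

```python
import math

def repulsive_velocity(p, obstacles, influence=3.0, eta=1.0):
    """Sum of -grad U_rep over obstacles, U_rep = 0.5*eta*(1/rho - 1/rho0)^2 for rho < rho0."""
    fx = fy = 0.0
    for ox, oy in obstacles:
        dx, dy = p[0] - ox, p[1] - oy
        rho = math.hypot(dx, dy)
        if rho == 0.0 or rho >= influence:
            continue   # outside the influence radius, or direction undefined
        mag = eta * (1.0 / rho - 1.0 / influence) / (rho * rho)
        fx += mag * dx / rho   # unit vector pointing away from the obstacle
        fy += mag * dy / rho
    return fx, fy

def combined_command(u_formation, p, obstacles, v_max=2.0):
    """Add repulsion to the formation command, then clamp the resulting speed."""
    rx, ry = repulsive_velocity(p, obstacles)
    vx, vy = u_formation[0] + rx, u_formation[1] + ry
    speed = math.hypot(vx, vy)
    if speed > v_max:
        vx, vy = vx * v_max / speed, vy * v_max / speed
    return vx, vy
```

The repulsion grows without bound as separation shrinks, so the speed clamp matters; the known weakness of this approach is local minima where attraction and repulsion cancel, which is one reason formation errors never reach exactly zero near obstacles.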

For dynamic formation reconfiguration, we generate minimum-snap polynomial trajectories that smoothly transition between formations while respecting velocity and acceleration limits. The trajectories are computed distributively—each drone plans its own path given the target formation and neighbor positions.
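For the simplest case, a single rest-to-rest segment along one axis, the minimum-snap polynomial has a closed form: with zero velocity, acceleration, and jerk at both ends, the seventh-order solution is p(s) = p0 + (p1 - p0)(35s^4 - 84s^5 + 70s^6 - 20s^7) with s = t/T. The sketch below uses that profile and picks the shortest duration T that respects assumed velocity and acceleration limits; the function names and limits are hypothetical, and multi-segment trajectories through several waypoints require solving a quadratic program instead.

```python
import math

V_PEAK = 35.0 / 16.0            # max of 140 s^3 (1-s)^3, reached at s = 0.5
A_PEAK = 16.8 / math.sqrt(5.0)  # max of |420 s^2 (1-s)^2 (1-2s)|, at s = (5 - sqrt(5)) / 10

def min_snap_duration(delta, v_max, a_max):
    """Shortest T keeping the rest-to-rest profile within speed and acceleration limits."""
    d = abs(delta)
    return max(V_PEAK * d / v_max, math.sqrt(A_PEAK * d / a_max))

def min_snap_sample(p0, p1, duration, t):
    """Position, velocity, and acceleration at time t of the rest-to-rest segment."""
    s = min(max(t / duration, 0.0), 1.0)
    delta = p1 - p0
    pos = p0 + delta * (35 * s**4 - 84 * s**5 + 70 * s**6 - 20 * s**7)
    vel = delta / duration * 140 * s**3 * (1 - s)**3
    acc = delta / duration**2 * 420 * s**2 * (1 - s)**2 * (1 - 2 * s)
    return pos, vel, acc
```

Usage: a 10 m move with a 2 m/s speed limit and a 1 m/s² acceleration limit is velocity-bound, giving T = 10.9375 s and a peak speed of exactly 2 m/s at the midpoint.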

Leader Election and Fault Tolerance

Dynamic leader election enables swarm resilience to individual failures. We use the Raft consensus algorithm to coordinate leader selection among drones satisfying minimum health criteria: sufficient battery, good localization confidence, stable communication links to a quorum of followers.

Heartbeat messages from the leader maintain follower awareness. If heartbeats cease for a timeout period, followers transition to candidate state and initiate a new election. Strict majority voting and deterministic tie-breaking (based on drone IDs) prevent split-brain scenarios where multiple leaders emerge.
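A minimal sketch of the vote-granting side of such an election, assuming a Raft-style term counter plus the health gate described above. The thresholds, field names, and quorum-link rule are illustrative assumptions; standard Raft also checks that the candidate's log is at least as up to date as the voter's, and the ID-based tie-breaking mentioned above is not modeled here.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Candidate:
    drone_id: int
    term: int
    battery: float          # remaining charge, 0..1
    loc_confidence: float   # navigation-filter confidence, 0..1 (hypothetical metric)
    linked_followers: int   # followers with stable links to this candidate

@dataclass
class Voter:
    current_term: int = 0
    voted_for: Optional[int] = None

MIN_BATTERY, MIN_LOC_CONF = 0.35, 0.8   # hypothetical health thresholds

def quorum(swarm_size: int) -> int:
    return swarm_size // 2 + 1

def healthy(c: Candidate, swarm_size: int) -> bool:
    # The candidate plus its linked followers must be able to form a quorum
    return (c.battery >= MIN_BATTERY and c.loc_confidence >= MIN_LOC_CONF
            and c.linked_followers + 1 >= quorum(swarm_size))

def handle_vote_request(v: Voter, c: Candidate, swarm_size: int) -> bool:
    if c.term < v.current_term:
        return False                                 # stale candidate
    if c.term > v.current_term:
        v.current_term, v.voted_for = c.term, None   # new term: the previous vote no longer counts
    if v.voted_for not in (None, c.drone_id):
        return False                                 # at most one vote per term
    if not healthy(c, swarm_size):
        return False
    v.voted_for = c.drone_id
    return True

def wins(votes_granted: int, swarm_size: int) -> bool:
    """Strict majority of the whole swarm, counting the candidate's own vote."""
    return votes_granted + 1 >= quorum(swarm_size)
```

Because each voter grants at most one vote per term and a win requires a strict majority, two candidates cannot both win the same term, which is the property that rules out split-brain leadership.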

The current leader coordinates high-level mission objectives and synchronizes state updates, but followers maintain enough local autonomy to continue safe flight if leadership is lost. This graceful degradation means swarm capability scales with the number of functional members rather than failing catastrophically at the first casualty.

We also implement Byzantine fault detection where drones compare received telemetry against sensor observations when neighbors are visible. Persistent discrepancies trigger alerts and can result in temporary exclusion from consensus decisions. This combines cryptographic authentication with behavioral anomaly detection in a defense-in-depth approach.

Security: Hardening Against Adversarial Threats

Building an autonomous system without addressing security is building a remotely exploitable robot. We've seen enough demonstrations of GPS spoofing, command hijacking, and control takeover to know that security can't be an afterthought.

Cryptographic Foundations

All network communications employ mutual TLS 1.3 with X.509 certificate authentication. Both ground stations and drones present certificates signed by a private certificate authority, preventing man-in-the-middle attacks. Forward secrecy via ephemeral Diffie-Hellman key exchange ensures that compromise of long-term keys doesn't expose historical traffic.

Inter-drone mesh messages use authenticated encryption with associated data (AEAD) via AES-256-GCM or ChaCha20-Poly1305. Session keys are established through Elliptic Curve Diffie-Hellman with ephemeral key pairs signed by Ed25519 identity keys stored in secure elements.

Critical flight commands carry digital signatures computed over the command structure and timestamp using the operator's Ed25519 private key. Drones verify signatures before execution and enforce temporal validity by rejecting commands with timestamps deviating more than 30 seconds from local time, mitigating replay attacks.
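The drone-side acceptance check can be sketched as below. Signature verification is passed in as a callable so the example stays library-agnostic (in practice it would wrap an Ed25519 verify call against the operator's public key); the command fields, the byte layout that gets signed, and the replay cache are assumptions. The cache matters because a 30-second freshness window on its own still lets an attacker replay a captured command within that window.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional

MAX_SKEW_S = 30.0

@dataclass(frozen=True)
class SignedCommand:
    command_id: str   # unique per command (assumed field)
    timestamp: float  # seconds since epoch, set by the operator station
    body: bytes
    signature: bytes

    def signed_bytes(self) -> bytes:
        # The signature must cover the ID and timestamp, not just the body
        return f"{self.command_id}|{self.timestamp:.3f}|".encode() + self.body

class CommandGate:
    def __init__(self, verify: Callable[[bytes, bytes], bool]):
        self.verify = verify   # (message, signature) -> bool
        self.seen = {}         # command_id -> timestamp, within the window

    def accept(self, cmd: SignedCommand, now: Optional[float] = None) -> bool:
        now = time.time() if now is None else now
        # Forget IDs that have aged out; they would fail the skew check anyway
        self.seen = {k: ts for k, ts in self.seen.items() if abs(now - ts) <= MAX_SKEW_S}
        if abs(now - cmd.timestamp) > MAX_SKEW_S:
            return False       # stale, or timestamped too far in the future
        if cmd.command_id in self.seen:
            return False       # replay inside the freshness window
        if not self.verify(cmd.signed_bytes(), cmd.signature):
            return False
        self.seen[cmd.command_id] = cmd.timestamp
        return True
```

The check order is cheap-first: timestamp and replay tests cost almost nothing, so the comparatively expensive signature verification only runs on commands that could otherwise be valid.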

Role-Based Access Control

Authorization follows a three-tier RBAC model:

Operators can issue routine flight commands (waypoint updates, formation changes) but cannot modify security configurations or approve emergency procedures.

Commanders have elevated privileges including leader election override and emergency landing authority, typically requiring dual approval from two distinct authenticated commanders.

Maintenance technicians can update firmware and modify configurations but are prohibited from issuing flight commands, preventing technician credentials from being abused for operational control.

Role assignments are encoded in X.509 certificate extensions. Both the ground control backend and individual drone policy engines validate role privileges before executing commands. This defense-in-depth ensures that even if ground station security is compromised, drones enforce an independent authorization layer.
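A drone-side policy check for the three roles might look like the sketch below. The action names are hypothetical, commanders are assumed to also hold routine flight rights, and reading roles out of the certificate extensions is not shown (the roles arrive here as plain strings from already-verified certificates); the structure mirrors the rules above, including dual approval from two distinct commanders.

```python
from typing import Iterable, Tuple

# Hypothetical action names per role
PERMISSIONS = {
    "operator":    {"waypoint_update", "formation_change"},
    "commander":   {"waypoint_update", "formation_change", "leader_override", "emergency_land"},
    "maintenance": {"firmware_update", "config_change"},   # no flight commands
}
DUAL_APPROVAL = {"leader_override", "emergency_land"}

def authorize(action: str, approvals: Iterable[Tuple[str, str]]) -> bool:
    """approvals: (subject_id, role) pairs taken from already-verified certificates."""
    # Count distinct subjects whose role grants the action
    permitted = {subject for subject, role in approvals
                 if action in PERMISSIONS.get(role, set())}
    required = 2 if action in DUAL_APPROVAL else 1
    return len(permitted) >= required
```

Counting distinct subjects rather than approval messages means one commander cannot satisfy dual approval by signing twice.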

Security State Machine

Each drone maintains a security state machine tracking trust levels:

TRUSTED: Normal operation, all systems functional

DEGRADED_LINK: Elevated packet loss or signature failures

AUTH_SUSPECT: Repeated invalid commands detected

ISOLATED_AUTONOMY: Rejecting external commands, executing safe return

State transitions are governed by counters tracking suspicious events with exponential backoff to prevent oscillation. In ISOLATED_AUTONOMY, drones execute autonomous return-to-launch if localization confidence drops or battery reaches critical reserve.

This ensures security degradation gracefully constrains capability rather than causing catastrophic failure. Operators monitor fleet security state through dashboard alerts when drones enter defensive postures.
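The escalation logic can be sketched as a small state machine over the four states listed above. The escalation threshold, the quiet period required before stepping back down, and the choice to double that quiet period on every escalation (the exponential backoff that prevents oscillation) are illustrative assumptions, as are the class and method names.

```python
from enum import IntEnum

class SecState(IntEnum):
    TRUSTED = 0
    DEGRADED_LINK = 1
    AUTH_SUSPECT = 2
    ISOLATED_AUTONOMY = 3

ESCALATE_AFTER = 3    # suspicious events before moving up one level (assumed)
BASE_QUIET_S = 10.0   # event-free time needed to step back down (assumed)

class SecurityMonitor:
    def __init__(self):
        self.state = SecState.TRUSTED
        self.events = 0
        self.quiet_needed = BASE_QUIET_S
        self.last_event = 0.0

    def report_suspicious(self, now):
        """Record a signature failure, invalid command, or similar event."""
        self.events += 1
        self.last_event = now
        if self.events >= ESCALATE_AFTER and self.state < SecState.ISOLATED_AUTONOMY:
            self.state = SecState(self.state + 1)
            self.events = 0
            self.quiet_needed *= 2   # backoff: each escalation lengthens recovery

    def tick(self, now):
        """Step down one level after a long enough event-free period."""
        if self.state > SecState.TRUSTED and now - self.last_event >= self.quiet_needed:
            self.state = SecState(self.state - 1)
            self.last_event = now    # each further step down needs its own quiet period
            if self.state == SecState.TRUSTED:
                self.quiet_needed = BASE_QUIET_S

    def accepts_external_commands(self):
        return self.state < SecState.ISOLATED_AUTONOMY
```

Recovery is deliberately slower than escalation, so a drone under intermittent attack ratchets toward a defensive posture instead of flapping between states.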

Performance: The Numbers That Matter

Theory is elegant, but hardware doesn't care about equations—it cares about whether the system works.

Localization Accuracy

Indoor testing with OptiTrack motion capture ground truth showed:

Hybrid VIO-TDOA fusion: 8.7 cm RMSE

VIO alone: 13.4 cm RMSE

TDOA alone: 19.8 cm RMSE

Outdoor testing without motion capture:

Hybrid fusion: 15.2 cm RMSE

VIO alone: 24.1 cm RMSE

TDOA alone: 28.7 cm RMSE

Drift accumulation for pure VIO increased quadratically at ~0.08 m²/s², consistent with double-integrated IMU noise. TDOA updates every 100ms bounded drift to sub-decimeter levels even after 120 seconds without loop closures.

Loop closure detection recovered from 5-meter position errors in 1.8 seconds on average, converging to sub-decimeter accuracy within an additional 0.5 seconds.

Swarm Coordination

Formation tracking for 4-drone swarms achieved:

Square formation: 18 cm standard deviation

Line formation: 21 cm standard deviation

Wedge formation: 24 cm standard deviation

Convergence from random initial positions required 4.2 seconds average to achieve sub-meter errors. Dynamic formation transitions completed within 6.8 seconds while maintaining minimum 1.5-meter separation.

Collision avoidance succeeded in 38/40 trials (95% success rate). The two failures were attributed to simultaneous localization loss in both aircraft rather than coordination defects. Minimum observed separation during successful avoidance was 1.2 meters.

Leader election after simulated failure required 1.5 seconds for follower timeout plus 0.8 seconds for consensus completion in the 3-follower configuration.

Computational Load

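On Jetson Nano 4GB platforms, the CPU time used by the stack breaks down as follows (shares of the time consumed, not of total capacity):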

Visual feature tracking: 38% CPU

EKF propagation/updates: 22% CPU

SLAM backend optimization: 15% CPU

Communication protocols: 12% CPU

Sensors and autonomy: 13% CPU

GPU acceleration of feature detection reduced CPU load by ~40% at the cost of increased power consumption. Current configuration achieves stable 30 Hz control loops with 25% CPU headroom.

Battery life under representative loads: ~18 minutes (power budget: propulsion 65%, computation 18%, communication 12%, sensors 5%).

Lessons Learned: What We'd Do Differently

Building complex systems teaches humility. Here's what surprised us:

Visual odometry is fragile in ways you don't expect. We knew about lighting changes and motion blur, but underestimated how badly precipitation degrades performance. Water droplets on the camera lens scatter light in ways that completely break feature tracking. Now we mandate pre-flight lens cleaning and have weather thresholds for safe operation.

Time synchronization is harder than it looks. Achieving sub-nanosecond TDOA synchronization over wireless networks (each nanosecond of clock error corresponds to about 30 centimeters of range error) pushed us through multiple iterations of clock discipline algorithms. Temperature-induced oscillator drift was particularly nasty; we ended up implementing continuous clock offset estimation rather than periodic calibration.
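Continuous clock estimation can be sketched as a sliding-window least-squares fit of each anchor's clock against a reference clock, yielding both an offset and a rate (skew) that are then used to correct raw timestamps. The window length, the use of ordinary least squares, and the class name are assumptions; real UWB systems often run a small Kalman filter per anchor instead. The sliding window is what lets the estimate follow slow, temperature-driven changes in skew.

```python
from collections import deque

class ClockTracker:
    """Track local_time ~= offset + rate * ref_time over a sliding window."""

    def __init__(self, window=50):
        self.samples = deque(maxlen=window)   # (ref_time, local_time) pairs, seconds

    def add_sync(self, ref_time, local_time):
        self.samples.append((ref_time, local_time))

    def fit(self):
        n = len(self.samples)
        if n < 2:
            raise ValueError("need at least two sync samples")
        # Center the data so the least-squares fit stays numerically well behaved
        mx = sum(r for r, _ in self.samples) / n
        my = sum(loc for _, loc in self.samples) / n
        sxx = sum((r - mx) ** 2 for r, _ in self.samples)
        sxy = sum((r - mx) * (loc - my) for r, loc in self.samples)
        rate = sxy / sxx
        return my - rate * mx, rate           # (offset, rate)

    def to_reference(self, local_time):
        """Map a raw local timestamp onto the reference timebase."""
        offset, rate = self.fit()
        return (local_time - offset) / rate
```

Usage: feeding sync pairs from an anchor whose clock runs 20 ppm fast with a 2.5 µs offset recovers both parameters, after which corrected timestamps agree with the reference far below the nanosecond level that centimeter-scale TDOA requires.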

Communication bandwidth is the hidden constraint. At 8 drones, the 2.4 GHz WiFi mesh started saturating, causing packet loss that degraded formation stability. We're transitioning to hierarchical telemetry aggregation and evaluating 5 GHz/mmWave alternatives.

Security slows everything down. TLS handshakes, signature verification, certificate validation—all those cryptographic operations add latency that matters when you're trying to maintain real-time control. We've optimized hot paths and cache certificates aggressively, but security always costs performance.

Operator training takes longer than expected. The complexity of multi-mode operation, security state transitions, and degraded-system responses requires more comprehensive training than we initially budgeted. Now we emphasize simulator practice before field deployment.

What's Next: The Road Ahead

This project represents a foundation, not a destination. Key areas we're actively developing:

Learned visual features robust to illumination variations, using neural network descriptors trained on diverse lighting conditions.

Semantic scene understanding to improve place recognition and enable goal-directed exploration ("find the red door").

Predictive link quality models for proactive communication routing adaptation before links fail.

Heterogeneous swarms mixing quadrotors and fixed-wing platforms for expanded range and endurance.

Commercial deployment transition, addressing manufacturing scalability, reliability engineering, and regulatory certification.

The broader vision is autonomous systems that truly don't need human intervention to navigate complex, unknown environments. GPS was a crutch that made early drones possible but also made them fragile. By building navigation systems that work without external infrastructure, we're creating robots that can go anywhere and do anything—not just where satellites can reach.
